Professional Data Engineer on Google Cloud Platform: PROFESSIONAL-DATA-ENGINEER

Want to pass your Professional Data Engineer on Google Cloud Platform PROFESSIONAL-DATA-ENGINEER exam on the very first attempt? Try Pass2lead! It is equally effective for both beginners and IT professionals.

- Vendor: Google

- Exam Code: PROFESSIONAL-DATA-ENGINEER

- Exam Name: Professional Data Engineer on Google Cloud Platform

- Certifications: Google Certifications

- Total Questions: 331 Q&As

- Updated on: Apr 18, 2024

- Note: Product instant download. Please sign in and click My account to download your product.

PDF

- Q&As Identical to the VCE Product

- Windows, Mac, Linux, Mobile Phone

- Printable PDF without Watermark

- Instant Download Access

- Download Free PDF Demo

- Includes 365 Days of Free Updates

VCE

- Q&As Identical to the PDF Product

- Windows Only

- Simulates a Real Exam Environment

- Review Test History and Performance

- Instant Download Access

- Includes 365 Days of Free Updates

Passing Certification Exams Made Easy

Everything you need to prepare for and quickly pass tough certification exams the first time

- 99.5% pass rate

- 7 years of experience

- 7000+ IT Exam Q&As

- 70000+ satisfied customers

- 365 days of free updates

- 3 days of preparation before your test

- 100% Safe shopping experience

- 24/7 Support


PROFESSIONAL-DATA-ENGINEER Online Practice Questions and Answers

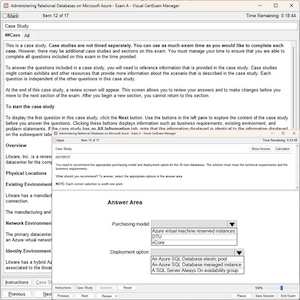

Question 1

You operate a database that stores stock trades and an application that retrieves the average stock price for a given company over an adjustable window of time. The data is stored in Cloud Bigtable, where the datetime of the stock trade is the beginning of the row key. Your application has thousands of concurrent users, and you notice that performance is starting to degrade as more stocks are added. What should you do to improve the performance of your application?

A. Change the row key syntax in your Cloud Bigtable table to begin with the stock symbol.

B. Change the row key syntax in your Cloud Bigtable table to begin with a random number per second.

C. Change the data pipeline to use BigQuery for storing stock trades, and update your application.

D. Use Cloud Dataflow to write a summary of each day's stock trades to an Avro file on Cloud Storage. Update your application to read from Cloud Storage and Cloud Bigtable to compute the responses.
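Bigtable stores rows sorted lexicographically by row key, so the leading part of the key decides which rows sit next to each other. The following is a minimal sketch, in plain Python with no Bigtable client, of how a timestamp-first key and a symbol-first key (the idea named in option A) order the same trades. The helper names, the `#` separator, and the sample trades are all hypothetical and chosen only for illustration.

```python
from datetime import datetime

# Hypothetical key builders; the "#" separator and timestamp layout are assumptions.
def time_first_key(symbol: str, ts: datetime) -> str:
    return f"{ts.strftime('%Y%m%d%H%M%S')}#{symbol}"

def symbol_first_key(symbol: str, ts: datetime) -> str:
    return f"{symbol}#{ts.strftime('%Y%m%d%H%M%S')}"

# Made-up sample trades, not real data.
trades = [
    ("GOOG", datetime(2024, 1, 2, 9, 30, 0)),
    ("AAPL", datetime(2024, 1, 2, 9, 30, 1)),
    ("GOOG", datetime(2024, 1, 2, 9, 30, 2)),
    ("AAPL", datetime(2024, 1, 2, 9, 30, 3)),
]

# Sorting the keys mimics the lexicographic row order of a sorted key-value store.
time_sorted = sorted(time_first_key(s, t) for s, t in trades)
symbol_sorted = sorted(symbol_first_key(s, t) for s, t in trades)

def scan_prefix(sorted_keys: list[str], prefix: str) -> list[str]:
    """Return the keys that a prefix scan would visit."""
    return [k for k in sorted_keys if k.startswith(prefix)]

if __name__ == "__main__":
    print("time-first order:  ", time_sorted)
    print("symbol-first order:", symbol_sorted)
    print("GOOG prefix scan:  ", scan_prefix(symbol_sorted, "GOOG#"))
```

With the symbol first, all trades for one company form a contiguous key range that a prefix scan can read directly, whereas with the timestamp first the same company's trades are interleaved with every other symbol and new writes all land at the end of the key space.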

Question 2

You are using Google BigQuery as your data warehouse. Your users report that the following simple query is running very slowly, no matter when they run the query:

SELECT country, state, city FROM [myproject:mydataset.mytable] GROUP BY country

You check the query plan for the query and see the following output in the Read section of Stage:1:

What is the most likely cause of the delay for this query?

A. Users are running too many concurrent queries in the system

B. The [myproject:mydataset.mytable] table has too many partitions

C. Either the state or the city columns in the [myproject:mydataset.mytable] table have too many NULL values

D. Most rows in the [myproject:mydataset.mytable] table have the same value in the country column, causing data skew
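Option D refers to data skew, where one value of the grouping column accounts for most of the rows, so the work for that key cannot be spread evenly. As a rough sketch of how such skew could be measured on a sample of the column, the snippet below computes the share of rows held by the most frequent value. The function name `key_skew` and the sample values are hypothetical and are not taken from the question.

```python
from collections import Counter

def key_skew(values: list[str]) -> tuple[str, float]:
    """Return the most frequent value and the fraction of rows it accounts for."""
    counts = Counter(values)
    top_value, top_count = counts.most_common(1)[0]
    return top_value, top_count / len(values)

# Made-up sample of a grouping column: nine rows share one value.
countries = ["US"] * 9 + ["CA"]
top, share = key_skew(countries)

if __name__ == "__main__":
    print(f"most common value: {top}, share of rows: {share:.0%}")
```

A share close to 1 means nearly all rows fall under a single grouping key, which is the situation option D describes.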

Question 3

You've migrated a Hadoop job from an on-prem cluster to Dataproc and GCS. Your Spark job is a complicated analytical workload that consists of many shuffling operations, and the initial data are Parquet files (on average 200-400 MB each).

You see some degradation in performance after the migration to Dataproc, so you'd like to optimize for it. You need to keep in mind that your organization is very cost-sensitive, so you'd like to continue using Dataproc on preemptibles (with 2 non-preemptible workers only) for this workload.

What should you do?

A. Increase the size of your Parquet files so that each is at least 1 GB.

B. Switch to the TFRecord format (approx. 200 MB per file) instead of Parquet files.

C. Switch from HDDs to SSDs, copy initial data from GCS to HDFS, run the Spark job and copy results back to GCS.

D. Switch from HDDs to SSDs, override the preemptible VMs configuration to increase the boot disk size.

Reviews

- These dumps are valid, and they are the only study material I used for this exam. Surprisingly, I met the same questions in the exam, so I passed without any doubt. Thanks for these dumps; I will recommend them to my friends.

- My good friend introduced this material to me. It is really useful and convenient. I prepared for the exam using only this material and achieved a higher score than others, so I'm very happy. Thanks to my friend and to this material.

- The content is updated quickly, and there are many new questions in these dumps. Thanks very much.

- There are many new questions in the dumps, and the answers are accurate. I finished my exam with a high score this morning. Thanks very much.

- Thanks to these dumps, I achieved a full score in the exam. I will share them with my good friends.

- Thanks for the advice. I passed my exam today! All the questions were from your dumps. Great job.

- When I sat for the exam, I found that some answers were in a different order in the real exam. So you can trust these dumps.

- I appreciate these dumps not only because they helped me pass the exam, but also because I gained a lot of knowledge and skills. Thanks very much.

- Just passed with 9xx. A piece of advice: memorize the dumps inside out, but still be careful. Some questions are tweaked, in that the options differ and your answers will be different. Read the question before answering!

- About 3 questions were different, but the rest was enough to pass. I passed successfully.
